Why Human-in-the-Loop AI Isn't Slowing You Down. It's Your Competitive Edge

95% of AI pilots fail. The 5% that succeed aren't moving faster; they're building human-in-the-loop architecture. Here's why HITL is your competitive edge, not a bottleneck.

Ari Kopmar

There's a stat making the rounds that should terrify every executive who's poured budget into an AI initiative: 95% of enterprise AI pilots fail to extract value. Not "underperform." Not "need optimization." Fail. According to Fortune's analysis of MIT research from August 2025, only 5% of organizations are actually extracting millions in value from their AI investments.[1] The rest? Billions spent, zero return.

Here's what makes this even stranger. At the same time, Deloitte reports that 34% of organizations claim they're using AI to "deeply transform" their business.[2] And VentureBeat found that 90% of individual workers are succeeding with personal AI tools.[3] So AI works for individuals but fails at enterprise scale?

That doesn't add up.

Unless the problem isn't the AI at all. The problem is how we're deploying it.

The companies in that 5% success bracket aren't moving faster than everyone else. They're not using better models or throwing more compute power at the problem. They figured out something more fundamental: where humans add leverage instead of friction. They built human-in-the-loop architecture while everyone else was chasing full autonomy.

And here's the part nobody talks about. HITL isn't slowing them down. It's their competitive edge.

Why 95% of AI Pilots Die (And It's Not What You Think)

Let me show you what's actually happening. Companies announce big AI initiatives. They run pilots that look incredible in demos. The technology works. The POC delivers results. Then everything stalls. Six months later, the pilot is still a pilot. A year later, it's quietly shelved.

Why? Most people blame the usual suspects. Bad data quality, immature technology, unrealistic ROI expectations. And sure, those factors matter. But they miss the real killer: deployment architecture.

Individual workers succeed with AI at a 90% rate because they use it as a copilot. They stay in control. They decide when to use it, how to apply the output, whether to trust the recommendation. There's an inherent human-in-the-loop structure built into how people naturally work with these tools.

Enterprises fail because they're trying to build the opposite: fully autonomous systems that make decisions without human involvement. They treat AI like a self-driving car when they should be building trains on tracks.

CIO.com nailed it in January 2026: "AI isn't failing because it's immature. It's failing because companies overestimate readiness, expect magic ROI, ignore data quality and treat autonomy as a goal rather than a spectrum."[4]

Autonomy as a spectrum. That's the key insight most organizations miss.

Why Governed AI Deploys Faster Than 'Autonomous' AI

Here's the counterintuitive truth that's driving competitive advantage right now: Organizations that embed governance early actually scale faster. Not slower. Faster.

The World Economic Forum published research in January 2026 showing that "organizations that embed AI governance early can avoid fragmentation, duplication, and risk, enabling AI to scale faster and reliably."[5] This flips the conventional wisdom on its head. We've been taught that governance means committees, approvals, bureaucracy. All the things that slow you down.

But in practice? Companies with human-in-the-loop architecture skip what I call "pilot purgatory." They don't build something, test it, panic about compliance, tear it down, rebuild it with guardrails, retest. They build with the guardrails from day one. They know exactly where humans need to be in the loop before they write the first line of code.

Look at cybersecurity teams. Hack The Box released benchmark data in March 2026 showing that AI-augmented elite teams achieve 3-4x productivity gains.[6] Notice the language: augmented, not autonomous. These teams didn't remove humans from security operations. They strategically placed humans at decision gates while letting AI handle the high-volume, repetitive analysis.

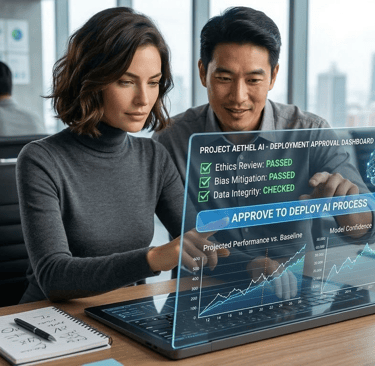

That's the architecture that works. Oracle described it perfectly in their March 2026 blog: "Deliberate placement of human oversight at specific decision points within an otherwise autonomous workflow."[7]

This doesn't mean a human reviews everything. That's a bottleneck. It means a human approves at critical junctures. That's governed autonomy.

Here's a concrete example. Think about credit approval at a bank.

AI does the heavy lifting. It pulls credit scores, verifies income, and calculates debt ratios. It runs your risk models and spits out recommendations. This all happens in seconds, no human needed.

But you don't let it approve loans by itself. Instead, you set up smart gates. Applications near your approval threshold? Human reviews those. Self-employed borrower with complicated financials? Human looks at it. First-time buyer with a thin credit file? Human judgment call.

Maybe 80% of applications are clear yes or clear no. AI processes those end to end. The other 20% get routed to experienced loan officers who actually know how to read between the lines.
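
The routing described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real lending policy: the `Application` fields, the `route` function, and every threshold value are hypothetical, and "complicated financials" is reduced to a simple self-employed flag.

```python
from dataclasses import dataclass

# Illustrative values only; a real lender would set these from its own policy.
APPROVAL_THRESHOLD = 0.70   # hypothetical model score needed to approve
REVIEW_MARGIN = 0.10        # scores this close to the threshold go to a human
THIN_FILE_ACCOUNTS = 3      # fewer credit accounts than this counts as a thin file


@dataclass
class Application:
    applicant_id: str
    risk_score: float       # hypothetical model output: 0.0 (decline) to 1.0 (approve)
    self_employed: bool
    credit_accounts: int


def route(app: Application) -> str:
    """Return 'human_review', 'auto_approve', or 'auto_decline'."""
    # Gate 1: self-employed borrowers always get a loan officer.
    if app.self_employed:
        return "human_review"
    # Gate 2: thin credit files need a judgment call.
    if app.credit_accounts < THIN_FILE_ACCOUNTS:
        return "human_review"
    # Gate 3: anything near the approval threshold is too close to automate.
    if abs(app.risk_score - APPROVAL_THRESHOLD) <= REVIEW_MARGIN:
        return "human_review"
    # Everything else is a clear yes or a clear no.
    if app.risk_score > APPROVAL_THRESHOLD:
        return "auto_approve"
    return "auto_decline"


if __name__ == "__main__":
    for app in [
        Application("A-1", 0.95, False, 8),   # clear yes
        Application("A-2", 0.72, False, 8),   # near the threshold
        Application("A-3", 0.95, True, 8),    # self-employed
        Application("A-4", 0.20, False, 8),   # clear no
    ]:
        print(app.applicant_id, route(app))
```

The gates are checked before the score, so a strong score never bypasses a human when one of the flagged conditions applies.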

What does this get you? You're processing applications 10x faster than manual review. Your risk management is better than fully autonomous systems because humans are catching the weird edge cases. And when compliance or legal asks questions, you've got answers: "Who approves the hard calls?" Your loan officers. "What if AI screws up?" It cannot approve a borderline or unusual application without a human in the chain.

Compare that to your competitor trying to build fully autonomous approval. They're still in month nine of their pilot, stuck in legal review, trying to figure out who's liable if the AI approves someone who defaults.

While Competitors Chase Autonomy, Winners Build Trust Architecture

There's a bigger shift happening that most companies aren't prepared for. IT Brief UK reported in March 2026 that enterprise AI agents are moving "from handy copilots to semi-autonomous operators."[8] These systems aren't just suggesting next steps anymore. They're taking actions, making decisions, initiating workflows.

This forces a question most companies aren't asking: What happens when your AI makes a decision you didn't anticipate?

If you built a fully autonomous system, you're hoping for the best and fixing damage later. If you built human-in-the-loop architecture, you already know the answer because you tested for it.

Here's why this matters competitively. HITL isn't defensive. It's not just about avoiding lawsuits or regulatory fines. It's offensive. It's what lets you deploy at scale while your competitors are stuck in pilot mode.

Think about the trust equation. When you go to your board and say "We're deploying AI agents to handle customer communications," what's the first question? Probably something like "What if it says something wrong?" or "Who's accountable if there's a problem?"

With autonomous systems, you're asking the board to trust the black box. Good luck with that. With HITL architecture, you can say "Every customer communication gets reviewed by our team before it goes out. AI drafts it, humans approve it. We get 10x productivity but maintain 100% oversight."

Which system gets board approval faster?

The same logic applies to customers, especially in high-stakes industries. Financial services, healthcare, legal. People want to know there's a human in the loop. Not because they don't trust AI, but because they don't trust purely algorithmic decisions when their money, health, or rights are on the line.

A financial advisor using AI to analyze portfolios and generate recommendations? Clients love it. Faster service, more comprehensive analysis. That same advisor saying "I just do whatever the AI tells me"? Clients run.

Human-in-the-loop becomes your trust architecture. It's not theater. It's a genuine accountability structure that makes stakeholders comfortable with AI deployment at scale.

And here's the part that creates real competitive advantage: HITL systems get better faster. Every time a human reviews an edge case and makes a decision, that becomes training data. Your AI learns from expert judgment on the exact scenarios it struggles with. Fully autonomous systems either make mistakes in production or never encounter those edge cases at all.
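
One way to picture that feedback loop is as a simple record of each review. The sketch below is an assumption about how such data might be captured, not a description of any particular system: `ReviewRecord`, `to_training_rows`, and `override_rate` are hypothetical names, and the retraining step itself is left out.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ReviewRecord:
    case_id: str
    features: Dict[str, float]   # hypothetical model inputs for this case
    ai_recommendation: str       # what the model suggested
    human_decision: str          # what the reviewer actually decided


def to_training_rows(records: List[ReviewRecord]) -> List[dict]:
    """Turn human review decisions into labeled rows for a later retraining run."""
    rows = []
    for r in records:
        rows.append({
            "case_id": r.case_id,
            "features": r.features,
            "label": r.human_decision,   # the expert's call becomes the label
            "ai_was_overridden": r.ai_recommendation != r.human_decision,
        })
    return rows


def override_rate(records: List[ReviewRecord]) -> float:
    """Share of reviewed cases where the human disagreed with the model."""
    if not records:
        return 0.0
    overridden = sum(r.ai_recommendation != r.human_decision for r in records)
    return overridden / len(records)
```

Tracking the override rate over time is one plausible signal for whether a gate is still earning its place or whether the model has caught up on those cases.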

McKinsey put it bluntly in June 2025: "As agentic AI begins to influence decisions at scale, forward-looking organizations need to reimagine governance, trust, and operating models, or risk falling behind."[9]

The companies deploying "Wild West AI" with no governance hit walls. Regulatory scrutiny. Reputational damage. Accuracy problems that erode customer trust. They don't fail because the AI is bad. They fail because they deployed irresponsibly fast.

Companies with HITL architecture deploy sustainably fast. They build trust with every stakeholder group, which enables aggressive scaling.

Don't Choose Speed Over Smart. Choose Both.

The question isn't "How do we get humans out of the way so AI can run faster?" That's the wrong frame entirely.

The question is "Where do humans create the most leverage in our AI workflows?"

Because here's what the data actually shows: The organizations succeeding with AI aren't removing humans. They're being strategic about where humans add value versus where they create bottlenecks.

Start by mapping your AI workflows. Identify the 3-5 decision points where stakes are genuinely high. Where are the financial risks? The legal exposures? The reputational vulnerabilities? Where do edge cases show up that AI hasn't seen enough times to handle confidently? Where does maintaining the customer relationship matter more than processing speed?

Those are your HITL gates. Everything else? Let it run autonomously.
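
The mapping exercise can be written down as a small checklist in code. This is a rough sketch, assuming each workflow step is tagged by hand against the five questions above; `WorkflowStep`, `plan_gates`, and the example step names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class WorkflowStep:
    name: str
    financial_risk: bool = False
    legal_exposure: bool = False
    reputational_risk: bool = False
    unfamiliar_edge_cases: bool = False
    relationship_critical: bool = False

    def risk_flags(self) -> List[str]:
        """Names of the high-stakes questions this step answers 'yes' to."""
        flags = {
            "financial_risk": self.financial_risk,
            "legal_exposure": self.legal_exposure,
            "reputational_risk": self.reputational_risk,
            "unfamiliar_edge_cases": self.unfamiliar_edge_cases,
            "relationship_critical": self.relationship_critical,
        }
        return [name for name, raised in flags.items() if raised]


def plan_gates(steps: List[WorkflowStep]) -> Dict[str, str]:
    """Mark a step as a human gate if any flag is raised, else autonomous."""
    return {
        step.name: "human_gate" if step.risk_flags() else "autonomous"
        for step in steps
    }


if __name__ == "__main__":
    steps = [
        WorkflowStep("extract_invoice_fields"),
        WorkflowStep("approve_refund_over_limit", financial_risk=True),
        WorkflowStep("send_contract_amendment", legal_exposure=True,
                     relationship_critical=True),
    ]
    for name, mode in plan_gates(steps).items():
        print(f"{name}: {mode}")
```

Keeping the flags explicit means the reason for each gate is on record when legal or the board asks why a human is, or is not, in a given step.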

This is the architecture that separates the 5% extracting millions from the 95% extracting nothing. It's not about having better AI models or more compute power. It's about building systems that your board will approve, your legal team will sign off on, your customers will trust, and your regulators won't shut down.

The companies we work with at Blue Lion aren't choosing between speed and governance anymore. They figured out that governance is their speed advantage. While competitors are stuck rebuilding broken pilots or navigating regulatory investigations, they're scaling systems that actually work.

The future isn't autonomous AI. It's intelligently augmented AI. And the companies building that architecture today are the ones that will dominate tomorrow.

Want to see what HITL architecture looks like for your specific workflows? Let's map it out.