WHY YOUR AI STRATEGY IS BACKWARDS (AND WHAT TO DO INSTEAD)

Most AI implementation strategies fail because companies ask the wrong question first. Learn why 80% of AI projects fail and the outcome-first framework that actually works.

Ari Kopmar


The Conference Room Ritual

The slide deck looks perfect. Your vendor just finished the demo. The AI tool predicted customer churn with 94% accuracy. It automated three weeks of data analysis into six minutes. The executive team is nodding.

Someone asks: "So what should we use this for?"

Silence.

Then someone offers: "Maybe customer service? Or... sales forecasting?"

You assign a task force. Budget gets approved. Twelve weeks later, you're still "exploring use cases."

This exact scene is playing out in thousands of companies right now. And according to RAND Corporation, roughly 80% of those AI projects will fail.

Not because the technology doesn't work. Because they started with the wrong question.

The Tool-First Trap

Here's how most AI strategies get built:

Someone reads an article about generative AI. Or a competitor announces they're "AI-first." Or a vendor cold-emails with an impressive case study.

The executive team gets FOMO. A directive comes down: "We need an AI strategy by Q2."

So the team does what feels logical:

· Research available AI tools

· Request demos from vendors

· Compare features and pricing

· Pick the most impressive one

· Form a "center of excellence"

· Start hunting for problems to solve

Six months in, you've got:

· A Slack channel nobody uses

· Three pilots that went nowhere

· One junior employee who's now the "AI expert" (they're not)

· Leadership asking "What are we getting for this investment?"

Forbes has reported that 95% of AI pilots fail to reach production. Gartner puts the overall failure rate for AI initiatives at 85%. And AI projects fail at twice the rate of traditional IT implementations.

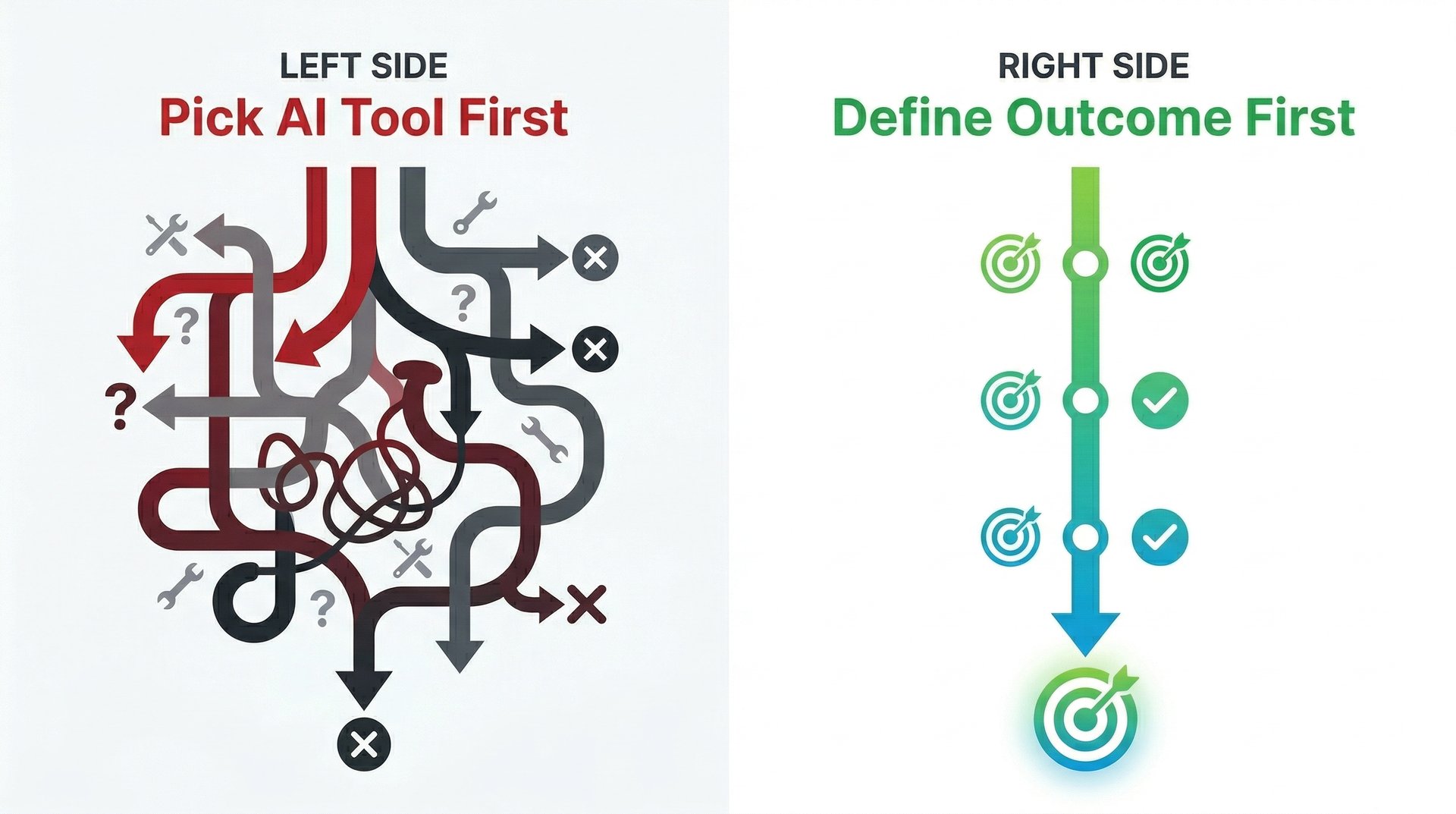

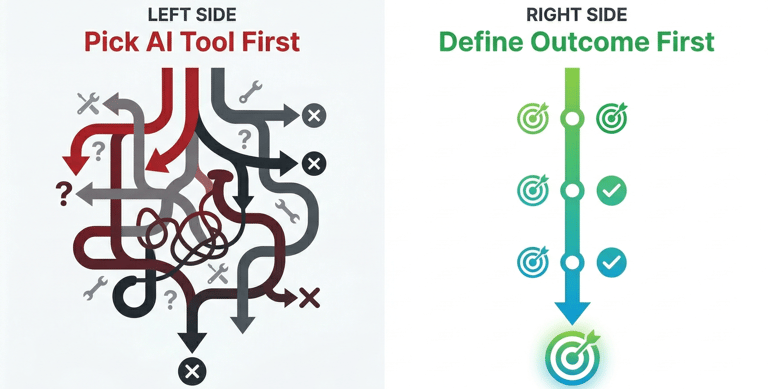

This isn't a people problem. It's not a technology problem. It's a sequencing problem.

You're starting at the end instead of the beginning.

Why Smart Companies Keep Failing

RAND Corporation spent months interviewing data scientists and ML engineers who lived through AI project failures. They identified five patterns that show up over and over:

1. Solution Looking for a Problem

"We have this incredible AI tool. Now let's find something to do with it."

That's like buying industrial kitchen equipment and then deciding you should probably open a restaurant. The logic is backwards.

One VP at a Fortune 500 company told us: "We spent $400K on an AI platform before anyone asked what we were trying to accomplish. Turned out we didn't actually need it."

2. The Data Mirage

Everyone assumes their data is "pretty good." It never is.

NewVantage found 92.7% of companies cite inadequate data infrastructure as their primary blocker. But you don't discover this until you're three months into implementation and the AI keeps hallucinating nonsense.

The data is in six different systems. Half of it uses different naming conventions. Nobody documented the schema changes from 2019. The "single source of truth" is actually four sources that contradict each other.

3. Vendor Promises vs. Your Reality

The demo works flawlessly because the vendor used clean sample data in a controlled environment.

Your environment has:

· Legacy systems from 2008

· APIs that break weekly

· Security protocols that block half the integrations

· A compliance team that hasn't approved new software since March

What worked in the demo dies in your infrastructure.

4. The Endless Pilot

"Let's test it with the northeast sales team first."

Pilot runs for three months. Results are "promising but inconclusive."

"Let's extend it another quarter and add the southeast region."

Another three months. "We need more data to make a decision."

A year later, you're still piloting. Production is always "next quarter." The budget keeps renewing. Nobody wants to pull the plug because of the sunk cost fallacy.

5. Nobody Actually Owns the Outcome

IT owns the implementation.

Operations owns the workflow.

The data team owns the models.

Compliance owns the approval process.

Who owns "make this actually work and deliver ROI"?

Nobody.

So when it stalls, everyone points at everyone else. The project dies in committee.

The Question Nobody's Asking

Every failed AI strategy starts the same way:

"What can AI do?"

That's the wrong question. It leads you to solutions before you've defined the problem.

The right question is:

"What specific outcome would materially change our business?"

Not "improve efficiency." Not "leverage AI." Specific outcomes with numbers:

· Reduce customer support ticket resolution time from 48 hours to 4 hours

· Cut accounts receivable aging from 45 days to 15 days

· Increase proposal win rate from 22% to 35%

· Eliminate the 600 hours/month we spend manually categorizing expenses

Notice something? None of those mention AI.

They mention outcomes.

Once you know the outcome, you can work backwards to determine if AI is even the right tool.

Sometimes it's not. Sometimes you just need better process documentation. Or a simple automation script. Or a spreadsheet formula someone should have written three years ago.

But when you start with "we need AI," you're committed to using AI whether it makes sense or not.

The Five-Question Filter

Before you spend a dollar on AI implementation, run your target problem through these five filters:

1. Is it high-volume and repetitive?

AI excels at doing the same task thousands of times. If this is a monthly task, AI is overkill. If it's a 200-times-per-day task, keep reading.

2. Does it have clear success criteria?

Can you measure improvement objectively? "Better customer experience" is vague. "Response time under 30 seconds" is measurable.

If you can't define success in numbers, AI won't help you.

3. Is the existing process actually documented?

If your team can't explain the current workflow in a flowchart, AI can't learn it. You'll just automate chaos.

Document first. Automate second.

4. Do you have the data infrastructure?

Not "do you have data." Do you have:

· Clean, structured data in accessible systems

· Consistent field definitions across sources

· Documented data lineage

· Governance protocols already in place

If you answered no to any of these, fix your data problem first.

5. What happens if it breaks?

AI will make mistakes. What's the failure mode?

If the AI misroutes a support ticket, you lose 30 minutes. If it miscalculates loan terms, you face regulatory penalties and lawsuits.

High-stakes processes need human oversight loops. Low-stakes processes can run fully automated.

If you answered "yes" to questions 1-4 and you have a plan for question 5, AI might be the right tool.

If not, you'll burn six months and six figures on a pilot that goes nowhere.
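If it helps to make the filter concrete, here is a minimal sketch of the five questions as a checklist in Python. Everything in it is an illustrative assumption rather than a standard tool: the Candidate fields, the five_question_filter name, and especially the 50-runs-per-day cutoff, which is just a placeholder sitting between "monthly" and "200 times per day." The example values mirror a Tier 1 support scenario where the process and data are not ready yet.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One candidate problem scored against the five questions (hypothetical structure)."""
    name: str
    runs_per_day: float       # Q1: how often the task happens
    success_metric: str       # Q2: a numeric target, or "" if none is defined
    process_documented: bool  # Q3: can the team draw the current workflow?
    data_ready: bool          # Q4: clean data, consistent fields, lineage, governance
    failure_plan: str         # Q5: what happens when the AI is wrong, or "" if unknown


def five_question_filter(c: Candidate, min_runs_per_day: float = 50) -> list[str]:
    """Return the blockers found; an empty list means AI might be the right tool.

    The min_runs_per_day default is an assumed cutoff, not a figure from any study.
    """
    blockers = []
    if c.runs_per_day < min_runs_per_day:
        blockers.append("Q1: not high-volume enough")
    if not c.success_metric.strip():
        blockers.append("Q2: no measurable success criterion")
    if not c.process_documented:
        blockers.append("Q3: document the process first")
    if not c.data_ready:
        blockers.append("Q4: fix the data foundation first")
    if not c.failure_plan.strip():
        blockers.append("Q5: no plan for when the AI is wrong")
    return blockers


# Example values are made up for illustration.
tickets = Candidate(
    name="Tier 1 support tickets",
    runs_per_day=300,
    success_metric="resolution time <= 4 hours",
    process_documented=False,
    data_ready=False,
    failure_plan="human agents review replies",
)

for blocker in five_question_filter(tickets):
    print(blocker)
```

The point of returning a list of blockers instead of a single yes or no is that the blockers are your to-do list: each one names the foundation work to finish before any tool gets bought.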

What the Outcome-First Approach Actually Looks Like

Let's say you run customer success for a SaaS company. Support tickets are piling up. Resolution times are climbing. Customers are churning.

The Backwards Way:

1. Google "AI customer support tools"

2. Watch demos from five vendors

3. Pick the one with the slickest UI

4. Run a pilot with 20% of tickets

5. Discover it can't integrate with your CRM

6. Spend three months on integration

7. Discover it gives terrible answers because it wasn't trained on your docs

8. Spend three more months fine-tuning

9. Team is exhausted. Pilot ends. Nothing ships.

The Outcome-First Way:

1. Define the outcome: "Reduce average ticket resolution time from 48 hours to 4 hours for Tier 1 issues."

2. Run the five-question filter:

· High-volume? Yes—300 tickets/day

· Measurable success? Yes—resolution time in hours

· Process documented? Not yet—spend two weeks mapping the workflow

· Data infrastructure? Partial—CRM has ticket data, but knowledge base is a mess of Google Docs

· Failure mode? Low stakes—human agents can catch mistakes

3. Fix the foundation first:

· Consolidate knowledge base into structured docs

· Tag historical tickets by resolution type

· Document the escalation workflow

4. Then evaluate if AI is the right solution:

· Can it auto-respond to "password reset" tickets? Yes.

· Can it surface relevant docs to agents? Yes.

· Can it route complex issues to specialists? Yes.

5. Pick the tool based on your requirements—not the vendor's feature list.

6. Ship incrementally:

· Week 1: Auto-respond to password resets only

· Week 3: Expand to account questions

· Week 6: Add intelligent routing

· Week 10: Measure impact—did resolution time drop to 4 hours?

7. Result: Production in 10 weeks. Measurable ROI. Team sees the value.

The difference? You started with the outcome. Technology came last.
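The Week 10 check does not need anything fancy. As a rough sketch, assuming you can export each ticket's opened and resolved timestamps from your CRM, the measurement is a few lines of Python. The timestamp format, the sample tickets, and the resolution_hours helper are all made up for illustration; only the 4-hour target comes from the outcome defined in step 1.

```python
from datetime import datetime
from statistics import mean

TARGET_HOURS = 4.0  # target from the outcome statement
TIME_FORMAT = "%Y-%m-%d %H:%M"  # assumed export format; adjust to your CRM


def resolution_hours(opened: str, resolved: str) -> float:
    """Hours between a ticket being opened and being resolved."""
    delta = datetime.strptime(resolved, TIME_FORMAT) - datetime.strptime(opened, TIME_FORMAT)
    return delta.total_seconds() / 3600


# Made-up sample of (opened, resolved) pairs, not real data.
tickets = [
    ("2024-03-04 09:00", "2024-03-04 11:30"),
    ("2024-03-04 10:15", "2024-03-04 16:15"),
    ("2024-03-05 08:00", "2024-03-05 09:00"),
]

hours = [resolution_hours(opened, resolved) for opened, resolved in tickets]
average = mean(hours)

print(f"Average resolution: {average:.1f} h (target {TARGET_HOURS} h)")
print("Outcome met" if average <= TARGET_HOURS else "Outcome not met yet")
```

Run the same calculation on pre-launch tickets first so you have a baseline. Without one, you cannot tell whether the number moved because of the rollout or was already there.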

Red Flags You're Doing It Backwards

You're likely stuck in the tool-first trap if:

· You have an "AI budget" but no specific business outcome tied to it

· Leadership keeps asking "what are we using AI for?" and the answer changes every quarter

· You're running three pilots but can't articulate what success looks like

· The team can describe the AI's features but not the business problem it solves

· You're waiting for the "perfect" solution before shipping anything

· Your AI strategy deck has more vendor logos than outcome metrics

If any of these sound familiar, stop. Restart from the outcome.

The Path Forward

Most companies will keep doing AI backwards. They'll chase the hype, buy the tools, run the pilots, and wonder why nothing works.

You don't have to be one of them.

Start with the outcome. Work backwards. Ask the five questions. Fix your foundation. Choose tools based on fit, not features. Ship incrementally. Measure religiously.

That's not a strategy you'll find in a vendor pitch deck. But it's the strategy that actually works while 80% of your competitors burn budget on pilots that never ship.

The companies that win with AI aren't the ones with the biggest budgets or the fanciest tools.

They're the ones who asked the right question first.